This week on Hacker News: a WhatsApp CLI, a 20-year-old bug, and Gemma 4 on iPhone

Five dev-relevant projects and posts that took the HN frontpage this week: from a Go-powered WhatsApp CLI with offline search to on-device Gemma 4 inference.

Five things trended hard this week, four on the Hacker News frontpage and one on r/LocalLLaMA, and they share a shape: concrete work, no hype, a lot of it open source. Here’s what developers were actually starring, reading, and arguing about between April 11 and April 15, 2026.

We pulled these from the top of Hacker News, the LocalLLaMA subreddit, and linked commentary. Each entry below has a short pitch, the interesting technical detail, and a link.

The list

| Project / post | What it is | Why it trended |

|---|---|---|

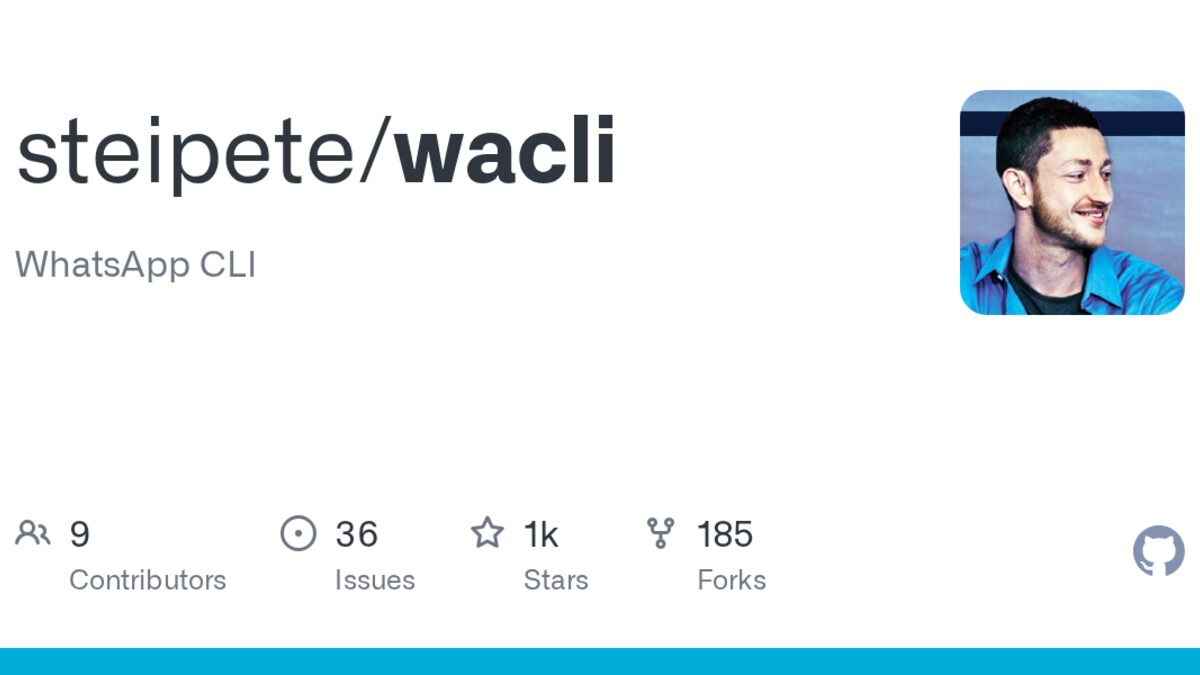

| wacli | Go-based WhatsApp CLI with offline search | 1.2k stars, 129 HN points |

| Dependency cooldowns | Blog post on supply-chain safety and free-riding | 129 HN points |

| Fixing an E16 bug | 20-year Linux window-manager bug finally fixed | 175 HN points |

| Gemma 4 on iPhone | Google’s open Gemma 4 running fully offline on A-series chips | 67 HN points |

| MiniMax-M2.7 license saga | Chinese AI lab’s open-weights license just got stricter | 184 LocalLLaMA points |

wacli: a WhatsApp CLI that actually works

wacli is a command-line WhatsApp client built on the whatsmeow Go library, with SQLite (FTS5) for local message storage and search. Install it with `brew install steipete/tap/wacli` on macOS, authenticate with `wacli auth`, and you’ve got grep-able message history plus programmatic send.

The commands are straightforward. `wacli messages search "term"` does offline full-text search against synced messages. `wacli send text --to [number] --message "text"` pushes a message without opening the app. `wacli sync --follow` keeps a long-running sync.
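To make the offline search concrete, here is a minimal sketch of SQLite FTS5 full-text search using Python's standard `sqlite3` module. The table name, columns, and sample messages are hypothetical, chosen for illustration only; wacli's real schema may look quite different, and this assumes your SQLite build has FTS5 enabled.

```python
import sqlite3

# Hypothetical schema for illustration; wacli's actual tables may differ.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE VIRTUAL TABLE messages USING fts5(chat, sender, body)")

# Made-up sample messages standing in for a synced history.
rows = [
    ("family", "alice", "Dinner at seven on Friday?"),
    ("work", "bob", "The deploy is done, invoice attached"),
    ("work", "carol", "Can you resend the invoice from March?"),
]
conn.executemany(
    "INSERT INTO messages (chat, sender, body) VALUES (?, ?, ?)", rows
)


def search(term: str) -> list[tuple[str, str, str]]:
    """Return (chat, sender, body) rows matching an FTS5 query, best match first."""
    cur = conn.execute(
        "SELECT chat, sender, body FROM messages "
        "WHERE messages MATCH ? ORDER BY rank",
        (term,),
    )
    return cur.fetchall()


if __name__ == "__main__":
    for chat, sender, body in search("invoice"):
        print(f"[{chat}] {sender}: {body}")
```

The point of the design is that the index lives in a local file, so a search never touches the network once messages are synced. Note that FTS5 has its own query syntax, so user input containing quotes or operators needs escaping before it is passed to `MATCH`.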

The author, Peter Steinberger, built this on top of whatsmeow, a reverse-engineered implementation of the WhatsApp Web protocol. That means no official WhatsApp API access; WhatsApp could break it at any time. For a daily driver that’s a risk. For a scripted backup of your own messages, or a chat pipeline you’re comfortable maintaining yourself, it’s useful in a way Meta’s official clients refuse to be.

Catch: it’s third-party. WhatsApp may kick the client’s device ID if they detect abnormal use. Scope the use case accordingly.
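For the personal scripting case, a thin wrapper might look like the sketch below. It uses only the subcommands and flags quoted in this post; the function names, the placeholder phone number, and its format are assumptions, and wacli's output format is not documented here, so the result is treated as opaque text.

```python
import shlex
import subprocess


def search_cmd(term: str) -> list[str]:
    """Build argv for: wacli messages search "term"."""
    return ["wacli", "messages", "search", term]


def send_text_cmd(number: str, message: str) -> list[str]:
    """Build argv for: wacli send text --to [number] --message "text"."""
    return ["wacli", "send", "text", "--to", number, "--message", message]


def run(argv: list[str]) -> str:
    """Run the command and return stdout as text.

    Raises subprocess.CalledProcessError on a non-zero exit code.
    Requires wacli to be installed and already authenticated.
    """
    done = subprocess.run(argv, capture_output=True, text=True, check=True)
    return done.stdout


if __name__ == "__main__":
    # Print the commands instead of running them; the number is a placeholder
    # and its expected format is an assumption.
    print(shlex.join(search_cmd("invoice")))
    print(shlex.join(send_text_cmd("+10000000000", "backup finished")))
```

Passing an argument list rather than a shell string means message text with quotes or spaces is never interpreted by a shell. Keeping the command builders separate from `run` also makes it easy to log or rate-limit sends, which matters given the risk of tripping abnormal-use detection.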

Cal Paterson: dependency cooldowns are free-riding

The post title does the work: “Dependency cooldowns turn you into a free-rider”. Paterson argues that the popular pattern of pinning dependencies to versions that are N days or weeks old, in order to dodge supply-chain attacks, works only because most teams don’t do it. If everyone waited, nobody would catch the malicious release early, and the cooldown would only delay the damage. You’re shifting the cost of exposure onto whoever updates fast enough to get hit.

The counter-argument in the HN thread is that “someone else should take the risk” is a valid industry pattern, and the alternative (everyone running latest) is strictly worse. Both sides are right, which is why the post lit up. If your team has an “n-day cooldown” on npm install or pip install, it’s worth reading the full argument and deciding how you’d defend the policy.
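If you want to reason about what a cooldown actually selects, the policy is small enough to sketch. The following assumes you already have publish dates per version from your registry; the release history and the function name are hypothetical, and no real package manager's behavior is being reproduced here.

```python
from datetime import date, timedelta
from typing import Optional


def pick_version(
    releases: dict[str, date], today: date, cooldown_days: int
) -> Optional[str]:
    """Return the most recently published version that is at least
    cooldown_days old, or None if no release is old enough."""
    cutoff = today - timedelta(days=cooldown_days)
    eligible = {v: d for v, d in releases.items() if d <= cutoff}
    if not eligible:
        return None
    # Newest by publish date, not by version-string ordering.
    return max(eligible, key=eligible.get)


# Hypothetical release history for an imaginary package.
RELEASES = {
    "2.3.0": date(2026, 3, 1),
    "2.3.1": date(2026, 4, 2),
    "2.4.0": date(2026, 4, 13),
}

if __name__ == "__main__":
    today = date(2026, 4, 15)
    print(pick_version(RELEASES, today, cooldown_days=7))
    print(pick_version(RELEASES, today, cooldown_days=0))
```

With a 7-day cooldown the two-day-old 2.4.0 is skipped in favor of 2.3.1. That skipped window is exactly the period in which, on Paterson's argument, someone without a cooldown has to install the release and notice if it is malicious.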

Enlightenment E16: 20-year-old bug, real fix

iczelia.net’s writeup is a slow, clear walkthrough of finding and fixing a decades-old window-positioning bug in the E16 window manager. It’s the kind of post HN rewards: specific repro, minimal code, actual patch, honest about the dead-ends along the way.

Why this trended even though almost nobody still runs E16: developers respect bug archaeology. Understanding why a 2004 codebase does what it does, and producing a fix that doesn’t break the eight people still using it, is a harder problem than writing a fresh feature. The post also works as a tutorial on reading C window-manager code, a skill few newer developers have practiced.

Gemma 4 runs offline on iPhone

According to Gizmoweek’s report, Google released Gemma 4 with native iPhone inference support this week. That means the model runs fully on-device on the A17/A18 Neural Engine, with no server call and no network required. The reported latency sits in the range where a consumer app could plausibly ship it.

For app developers, that’s the interesting part. On-device inference means you can ship AI features to your users without paying OpenAI or Anthropic per request, without worrying about a backend going down, and without leaving a user’s data in the cloud. The cost model changes completely. The ceiling on model quality is lower than whatever Claude 4.6 can do, but for summarization, rewriting, classification, and narrow task automation, Gemma 4 on-device clears the bar.

Watch for indie iOS apps shipping Gemma-4-backed features in the next 60 days. The rollout pattern from smaller Apple Intelligence integrations suggests that’s when the third-party SDK adoption kicks in.

MiniMax-M2.7: open-weights license tightens

The Chinese AI lab MiniMax quietly pushed a license change to their M2.7 model on Hugging Face this week. The new terms restrict commercial use more aggressively than the prior release. LocalLLaMA caught it fast and the thread ran 184 points deep.

A follow-up post clarifies that MiniMax’s Ryan Lee confirmed the team is still working on the license, and that “sale of products built by m2.7 is permitted.” So it’s not a full “Llama-style community license” clampdown, but it’s also not the open-weights license people assumed. If you’re deploying M2.7 commercially, read the current license, not the version you cloned last month.

This is the pattern to watch for all open-weights releases from non-Western labs this year: the legal text moves faster than the docs, and your risk is in the delta.
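One low-effort way to see that delta is to keep a copy of the license text you originally reviewed and diff it against the current one. The sketch below uses only Python's standard library; the two license strings are placeholders rather than MiniMax's actual wording, and how you fetch the current text is left out.

```python
import difflib
import hashlib


def fingerprint(text: str) -> str:
    """SHA-256 of the license text, for a cheap changed/unchanged check."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def license_delta(reviewed: str, current: str) -> list[str]:
    """Unified diff lines between the reviewed and the current license text."""
    return list(
        difflib.unified_diff(
            reviewed.splitlines(),
            current.splitlines(),
            fromfile="LICENSE (reviewed)",
            tofile="LICENSE (current)",
            lineterm="",
        )
    )


# Placeholder text, not the real license wording.
REVIEWED = "Clause 1: example text A\nClause 2: example text B\n"
CURRENT = "Clause 1: example text A\nClause 2: example text C\n"

if __name__ == "__main__":
    if fingerprint(REVIEWED) != fingerprint(CURRENT):
        print("\n".join(license_delta(REVIEWED, CURRENT)))
```

Storing the fingerprint of the reviewed text next to your deployment config gives you a cheap check to run in CI; the diff itself is what you hand to whoever signs off on licensing.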

Our pick

Read: Cal Paterson on dependency cooldowns. The argument is uncomfortable in the right way, and whether you agree or not it’ll force your team to explicitly defend your current dependency policy instead of drifting with it.

Install: wacli if you run macOS and want scripted WhatsApp for yourself only. Avoid for production pipelines because of the third-party risk.

Bookmark: the Gemma 4 iPhone story. If you ship consumer apps, this rewrites your AI-feature cost model in the next release cycle.

TL;DR

- wacli: Go-based WhatsApp CLI with offline FTS5 search. Useful for yourself; risky for production.

- Cal Paterson: cooldown policies are free-riding on whoever updates fast. Read and decide.

- E16 bug fix: bug-archaeology tutorial masquerading as a patch. Worth the read.

- Gemma 4 on iPhone: on-device inference meaningfully changes the AI-feature cost model.

- MiniMax-M2.7 license tightened. If you deploy it commercially, re-read the current text.

Sources

- Wacli – WhatsApp CLI: sync, search, send — GitHub / steipete

- Dependency cooldowns turn you into a free-rider — Cal Paterson

- Fixing a 20-year-old bug in Enlightenment E16 — iczelia.net

- Google Gemma 4 Runs Natively on iPhone with Full Offline AI Inference — Gizmoweek

- MiniMax-M2.7 license update — Hugging Face